So far, I’ve presented the following topics:

- How to install Cilium on Azure Kubernetes Service (cilium install command): https://cloud-cod.com/index.php/2026/02/16/azure-aks-byo-cni-with-cilium/
- How to enable Hubble and verify L7 Cilium Network Policies are enforced: https://cloud-cod.com/index.php/2026/03/03/end-to-end-l7-visibility-with-cilium-hubble/
- How to install Cilium on Azure Kubernetes Service using Helm: https://cloud-cod.com/index.php/2026/03/09/aks-cilium-installation-with-helm/

Now, it’s time to show how to fully replace kube-proxy. I’ll use my AKS cluster with Cilium (deployed with Helm).


Validation

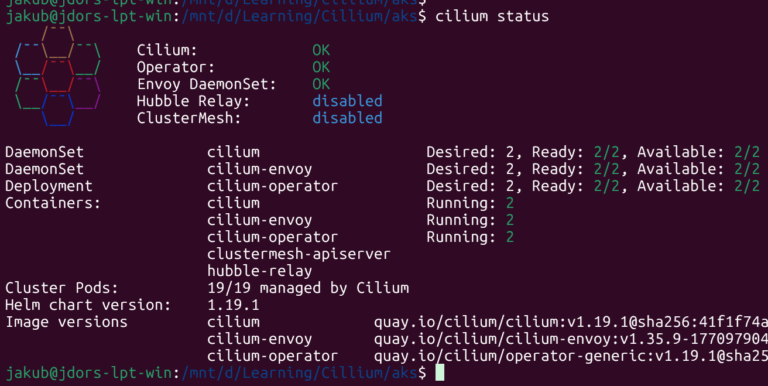

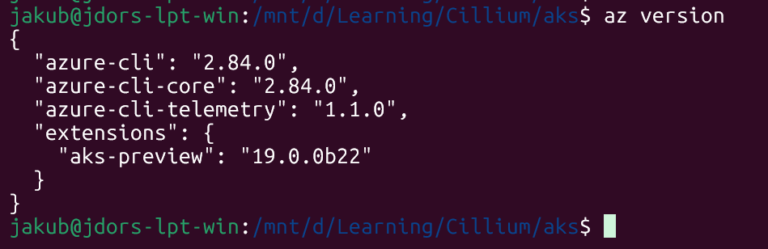

My cluster is running Cilium 1.19.1, which I installed using Helm:

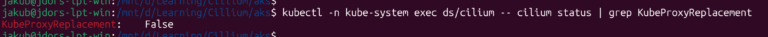

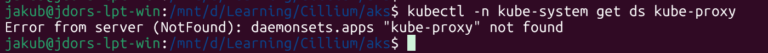

Let’s confirm that kube‑proxy is still running and that Cilium has not taken over yet.

# kube-proxy DaemonSet on AKS

kubectl -n kube-system get ds kube-proxy

# Cilium status from any agent pod

kubectl -n kube-system exec ds/cilium -- cilium status | grep KubeProxyReplacement
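The status check above can be wrapped in a small helper so the same test is easy to repeat later in the article. This is only a sketch: the function name kpr_state is my own, and it assumes the status output contains a line of the form "KubeProxyReplacement: <value>", as the grep above implies.

```shell
# kpr_state: read Cilium status text on stdin and print the value of the
# KubeProxyReplacement field (hypothetical helper, not part of Cilium).
# Prints "unknown" when the field is not present in the input.
kpr_state() {
  awk '/KubeProxyReplacement:/ { print $2; found = 1; exit }
       END { if (!found) print "unknown" }'
}

# Usage (not executed here; requires access to the cluster):
#   kubectl -n kube-system exec ds/cilium -- cilium-dbg status | kpr_state
```

Printing only the value keeps the result easy to compare in a script, for example before and after the Helm upgrade.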

And now a very important detail:

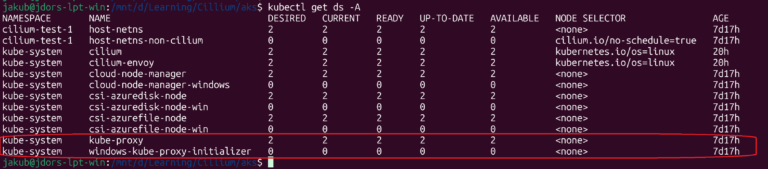

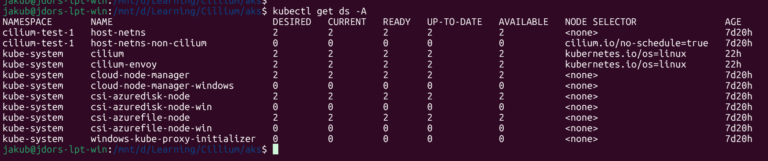

- kube-proxy is the actual Linux kube‑proxy DaemonSet we want to get rid of (AKS will manage this based on the kube‑proxy config).
- windows-kube-proxy-initializer is a Windows helper DaemonSet that confuses Cilium’s kube‑proxy detection because its name contains kube-proxy, even though it’s not doing anything on Linux nodes.

In the current state, Cilium is only acting as the CNI and datapath, while kube‑proxy is still responsible for implementing most Kubernetes Service routing.

Some aspects of traffic handling (for example basic ClusterIP load‑balancing) already pass through Cilium’s eBPF datapath, but kube‑proxy still programs the Service rules, so it still determines how Services such as NodePort and LoadBalancer are routed.

Kube-Proxy Removal

On AKS you cannot permanently remove kube‑proxy just by deleting the DaemonSet. AKS may recreate it. The supported way is to update the cluster’s kube‑proxy configuration and disable it.

Registering aks-preview

The --kube-proxy-config flag we’ll use is preview-only. We have to install the aks-preview extension:

az extension add --name aks-preview

az extension update --name aks-preview

az feature register \
  --namespace "Microsoft.ContainerService" \
  --name "KubeProxyConfigurationPreview"

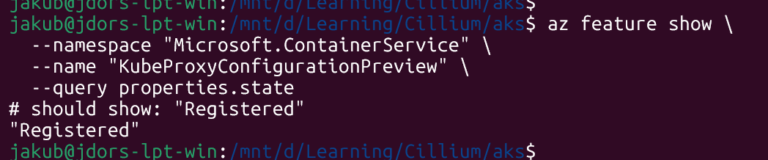

az feature show \
  --namespace "Microsoft.ContainerService" \
  --name "KubeProxyConfigurationPreview" \
  --query properties.state

The az feature show command must return Registered before you continue.
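Feature registration can take several minutes, so instead of re-running the command by hand you can poll for the state. The sketch below is an assumption on my side rather than something from the Azure documentation: wait_for_state and feature_state are hypothetical helper names, and the attempt count and delay are arbitrary. Once the feature is registered, Azure normally expects the resource provider registration to be refreshed with az provider register.

```shell
# wait_for_state EXPECTED ATTEMPTS DELAY CMD [ARGS...]
# Runs CMD repeatedly until its output equals EXPECTED or ATTEMPTS is
# exhausted, sleeping DELAY seconds between tries (hypothetical helper).
wait_for_state() {
  _expected=$1
  _attempts=$2
  _delay=$3
  shift 3
  _i=1
  _state=""
  while [ "$_i" -le "$_attempts" ]; do
    _state=$("$@" 2>/dev/null)
    if [ "$_state" = "$_expected" ]; then
      echo "state: $_state"
      return 0
    fi
    sleep "$_delay"
    _i=$((_i + 1))
  done
  echo "gave up; last state: ${_state:-none}" >&2
  return 1
}

# Prints the bare registration state; -o tsv strips the JSON quotes.
feature_state() {
  az feature show \
    --namespace "Microsoft.ContainerService" \
    --name "KubeProxyConfigurationPreview" \
    --query properties.state -o tsv
}

# Usage (not executed here; requires a logged-in Azure CLI):
#   wait_for_state Registered 30 20 feature_state \
#     && az provider register --namespace Microsoft.ContainerService
```

Passing the query command as an argument keeps the polling loop generic, so it can be reused for other checks.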

Removing Kube-Proxy

The following variables specify my Cluster details:

RESOURCE_GROUP=<cluster-resource-group>

CLUSTER_NAME=<cluster-aks-name>

Let’s create the minimal config file named kube-proxy-disabled.json

{
  "enabled": false
}
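If you prefer to create the file from the shell, a here-document works. The two function names below are hypothetical helpers of mine, and the check is a plain text match on the "enabled": false setting, not a full JSON validation.

```shell
# write_kube_proxy_config PATH
# Writes the minimal config shown above to PATH (hypothetical helper).
write_kube_proxy_config() {
  cat > "$1" <<'EOF'
{
  "enabled": false
}
EOF
}

# config_disables_kube_proxy PATH
# Succeeds only if PATH contains "enabled": false (simple text check).
config_disables_kube_proxy() {
  grep -Eq '"enabled"[[:space:]]*:[[:space:]]*false' "$1"
}

# Usage:
#   write_kube_proxy_config kube-proxy-disabled.json
#   config_disables_kube_proxy kube-proxy-disabled.json && echo "config OK"
```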

Azure CLI 2.59.0 or newer is required.

Apply the config file to the Cluster:

az aks update \
  -g $RESOURCE_GROUP \
  -n $CLUSTER_NAME \
  --kube-proxy-config kube-proxy-disabled.json

Example of the desired output:

Verification (the DaemonSet is gone):

kubectl -n kube-system get ds kube-proxy

Now the control plane no longer runs kube‑proxy on your nodes.

Enable Cilium Kube-Proxy Replacement (via Helm)

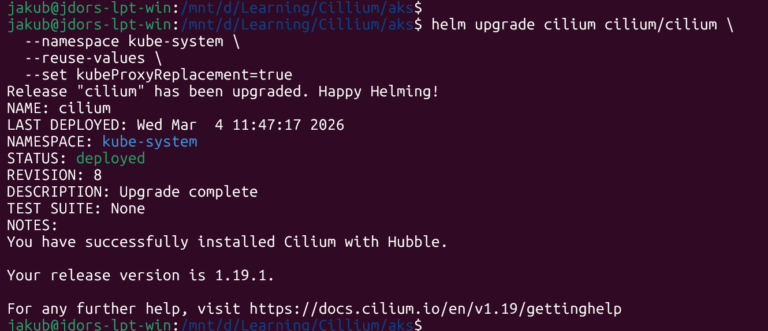

I already have Cilium 1.19.1 installed with Helm. Now I will switch it into full kube‑proxy replacement mode.

We can now upgrade Cilium using Helm:

helm upgrade cilium cilium/cilium \
  --namespace kube-system \
  --reuse-values \
  --set kubeProxyReplacement=true
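One thing worth checking at this point: without kube‑proxy, the Cilium agent cannot reach the API server through the kubernetes ClusterIP, so the Cilium documentation for kube‑proxy-free setups has you pass the API server address through the k8sServiceHost and k8sServicePort Helm values. Whether your existing values (kept by --reuse-values) already contain them depends on how Cilium was installed, so treat the following as a sketch. The helper name split_api_url is hypothetical, and falling back to port 443 when the URL has no port is my assumption.

```shell
# split_api_url URL
# Prints "host port" for an API server URL such as https://host:443
# (hypothetical helper; assumes port 443 when the URL has no port).
split_api_url() {
  _url=${1#*://}     # drop the scheme, if any
  _url=${_url%%/*}   # drop any trailing path
  case $_url in
    *:*) _host=${_url%:*}; _port=${_url##*:} ;;
    *)   _host=$_url; _port=443 ;;
  esac
  printf '%s %s\n' "$_host" "$_port"
}

# Usage (not executed here; requires kubectl and helm access):
#   API_URL=$(kubectl config view --minify \
#     -o jsonpath='{.clusters[0].cluster.server}')
#   set -- $(split_api_url "$API_URL")
#   helm upgrade cilium cilium/cilium --namespace kube-system --reuse-values \
#     --set kubeProxyReplacement=true \
#     --set k8sServiceHost="$1" --set k8sServicePort="$2"
```

If the agent pods are not restarted by the upgrade, kubectl -n kube-system rollout restart ds/cilium makes them pick up the new configuration.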

The output:

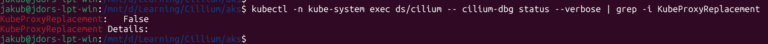

Interestingly and unexpectedly, when you check whether Cilium has taken over the kube-proxy function, the output shows that it has not:

kubectl -n kube-system exec ds/cilium -- cilium-dbg status --verbose | grep -i KubeProxyReplacement

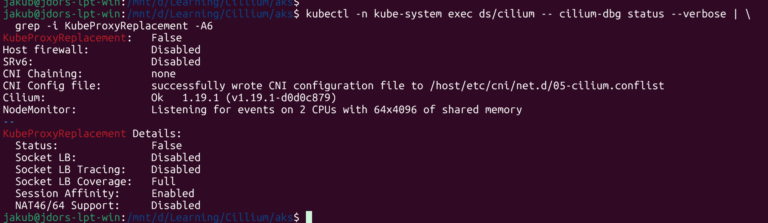

kubectl -n kube-system exec ds/cilium -- cilium-dbg status --verbose | \
  grep -i KubeProxyReplacement -A6

Why is that?

AKS and Cilium Kube-Proxy Replacement Issue / Bug

On my AKS BYOCNI cluster, Cilium still reports KubeProxyReplacement: False even though I disabled kube-proxy via --kube-proxy-config and set kubeProxyReplacement=true in the Helm values.

The reason is that AKS now deploys an internal DaemonSet called windows-kube-proxy-initializer in the kube-system namespace, and its name contains the string kube-proxy.

Cilium’s detection logic simply scans all DaemonSets in kube-system and, if it finds any whose name contains kube-proxy, it assumes kube-proxy is still installed and refuses to enable kube-proxy replacement.
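To illustrate why a substring match trips over the Windows helper, here is a minimal sketch of the behaviour described above. It is not Cilium's actual code: both function names are hypothetical, and the exact-name variant is shown only for contrast.

```shell
# ds_matching_kube_proxy: read DaemonSet names (one per line) on stdin and
# print every name that CONTAINS "kube-proxy" (substring match).
ds_matching_kube_proxy() {
  grep -F 'kube-proxy' || true
}

# ds_exactly_kube_proxy: print only a name that IS exactly "kube-proxy".
ds_exactly_kube_proxy() {
  grep -Fx 'kube-proxy' || true
}

# Usage (not executed here; requires access to the cluster):
#   kubectl -n kube-system get ds -o name | sed 's|.*/||' | ds_matching_kube_proxy
```

With only windows-kube-proxy-initializer left in kube-system, the substring match still reports a hit, while the exact-name match reports nothing.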

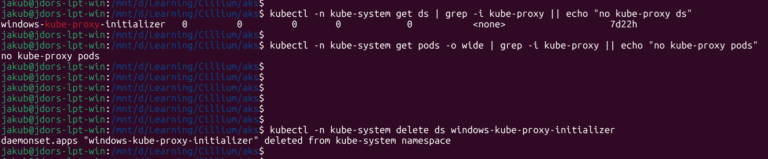

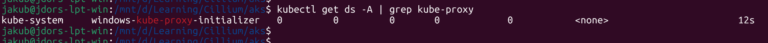

Simply deleting the windows-kube-proxy-initializer DaemonSet is not a real fix, because it is managed by the AKS control plane and is automatically recreated after deletion. As a result, Cilium will always detect a kube-proxy-named DaemonSet on this cluster and will never switch KubeProxyReplacement to True, even though the Linux kube-proxy has been disabled.

kubectl -n kube-system delete ds windows-kube-proxy-initializer

The windows-kube-proxy-initializer comes back within a minute:

kubectl get ds -A | grep kube-proxy

Conclusions

This interaction between Cilium’s detection logic and the AKS-managed windows-kube-proxy-initializer DaemonSet is currently tracked as a bug/limitation in AKS and in the Cilium issue tracker. The net effect for BYOCNI clusters is that full kube-proxy replacement with Cilium is not reliably achievable on AKS at the moment, unless Microsoft changes how this DaemonSet is deployed or Cilium adopts a different detection strategy.