In my previous article (https://cloud-cod.com/index.php/2026/03/11/running-kind-on-aws-ec2/), I showed how to run a multi‑node Kind cluster on an Ubuntu EC2 instance. In this post, we go one step further:

- we install Cilium as the CNI

- and enable its eBPF‑based kube‑proxy replacement so that Cilium handles all Kubernetes Service traffic, including ClusterIP and NodePort.

We will then deploy a simple nginx application and expose it via NodePort and Kind’s port mappings, effectively simulating a LoadBalancer from the outside world.

Prerequisites

You should already have:

- An AWS EC2 instance (Ubuntu 24.04 LTS recommended) with Docker, kubectl, and Kind installed.

- A running Kind cluster created with the default CNI disabled.
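
For reference, a Kind configuration consistent with the rest of this post might look like the sketch below. The actual file from the previous article is not reproduced here, so the file name `kind-cilium.yaml`, the node count, and the layout are assumptions. The pod subnet (10.111.0.0/16) and the mapping from host port 80 to container port 30080 are the values used later in this post.

```shell
# Write a hypothetical Kind config (file name and node count are assumptions).
cat > kind-cilium.yaml <<'EOF'
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
networking:
  disableDefaultCNI: true        # no kindnet; Cilium is installed later
  podSubnet: "10.111.0.0/16"     # should match clusterPoolIPv4PodCIDRList
nodes:
  - role: control-plane
    extraPortMappings:
      - containerPort: 30080     # NodePort used for nginx later on
        hostPort: 80             # port exposed on the EC2 host
        protocol: TCP
  - role: worker
  - role: worker
EOF

# Create the cluster from this file (uncomment to run):
# kind create cluster --config kind-cilium.yaml
```

With a file like this, traffic that reaches port 80 on the EC2 host is forwarded to port 30080 on the control-plane node container.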

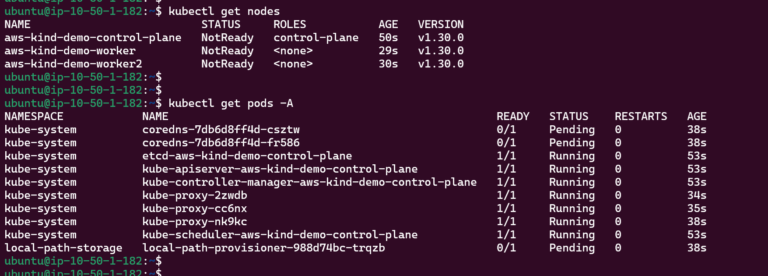

Right now, my Kind cluster on EC2 is up, but all nodes are NotReady and several system pods are stuck in Pending. The core control-plane components and kube-proxy are running, but both coredns and the local-path-provisioner remain Pending because no CNI plugin is installed yet. This is the exact starting point from which we will remove kube-proxy, install Cilium as the CNI, and enable its kube-proxy replacement.

Kube-Proxy Removal

Cilium’s kube‑proxy replacement expects kube‑proxy to be absent; otherwise, two components would try to program Service rules at the same time. Kind deploys kube‑proxy by default, so we must remove its DaemonSet.

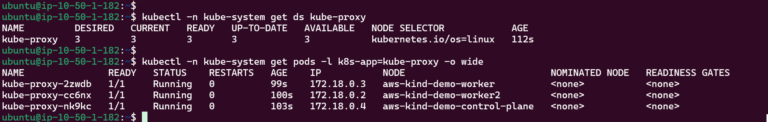

kubectl -n kube-system get ds kube-proxy

kubectl -n kube-system get pods -l k8s-app=kube-proxy -o wide

Delete the DaemonSet:

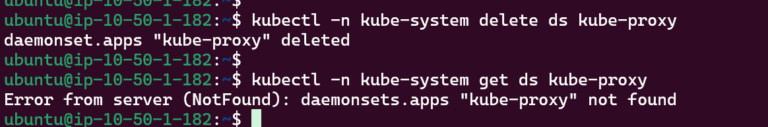

kubectl -n kube-system delete ds kube-proxy

On a “real” bare‑metal cluster you would also need to clean up kube‑proxy’s iptables rules. In Kind, these rules are isolated to the containerized nodes, and Service traffic is handled by Cilium’s eBPF datapath once it is installed, so we skip that cleanup here.
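
For completeness, here is a minimal sketch of what that cleanup could look like on a regular node. It is not needed for this Kind lab. The helper name `strip_kube_rules` is hypothetical; it simply filters every line that mentions a KUBE chain out of an `iptables-save` dump.

```shell
# Hypothetical helper: drop every line that mentions a KUBE-* chain
# from an iptables-save dump read on stdin.
strip_kube_rules() {
  grep -v 'KUBE' || true   # grep exits 1 when nothing is left; treat that as success
}

# On a real node, as root (not needed inside Kind):
#   iptables-save | strip_kube_rules | iptables-restore
```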

Cilium Helm Repo Installation

We will use Helm to install Cilium and explicitly enable kube‑proxy replacement.

Helm installation:

HELM_VERSION=v3.15.0

curl -LO https://get.helm.sh/helm-${HELM_VERSION}-linux-amd64.tar.gz

tar -xzf helm-${HELM_VERSION}-linux-amd64.tar.gz

sudo mv linux-amd64/helm /usr/local/bin/helm

rm -rf linux-amd64 helm-${HELM_VERSION}-linux-amd64.tar.gz

helm version

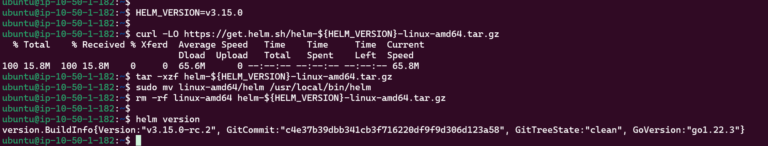

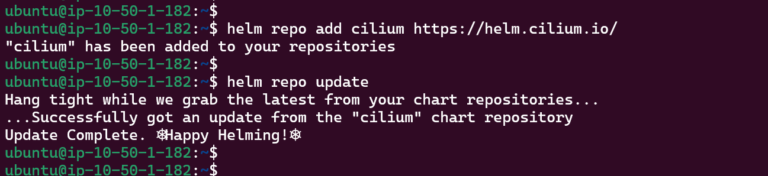

Add the Cilium Helm repo:

helm repo add cilium https://helm.cilium.io/

helm repo update

Cilium Installation

Cilium needs to know where to reach the Kubernetes API server when it runs without kube‑proxy:

API_SERVER_IP=$(kubectl get endpoints kubernetes -o jsonpath='{.subsets[0].addresses[0].ip}')

API_SERVER_PORT=$(kubectl get endpoints kubernetes -o jsonpath='{.subsets[0].ports[0].port}')

echo "$API_SERVER_IP $API_SERVER_PORT"
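
If either lookup returns an empty string, the Helm install further down would silently receive an empty host or port. A small guard can catch that early. This is a sketch only: the function name `check_api_endpoint` is hypothetical and the checks are deliberately basic.

```shell
# Hypothetical guard: refuse to continue when the discovered endpoint looks wrong.
# $1 = API server IP, $2 = API server port
check_api_endpoint() {
  if [ -z "$1" ]; then
    echo "API server IP is empty" >&2
    return 1
  fi
  case "$2" in
    ''|*[!0-9]*)
      echo "API server port '$2' is not numeric" >&2
      return 1
      ;;
  esac
  if [ "$2" -lt 1 ] || [ "$2" -gt 65535 ]; then
    echo "API server port $2 is out of range" >&2
    return 1
  fi
  echo "API server endpoint: $1:$2"
}

# Usage, after the two kubectl lookups:
#   check_api_endpoint "$API_SERVER_IP" "$API_SERVER_PORT" || exit 1
```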

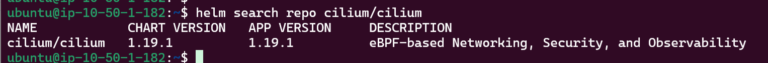

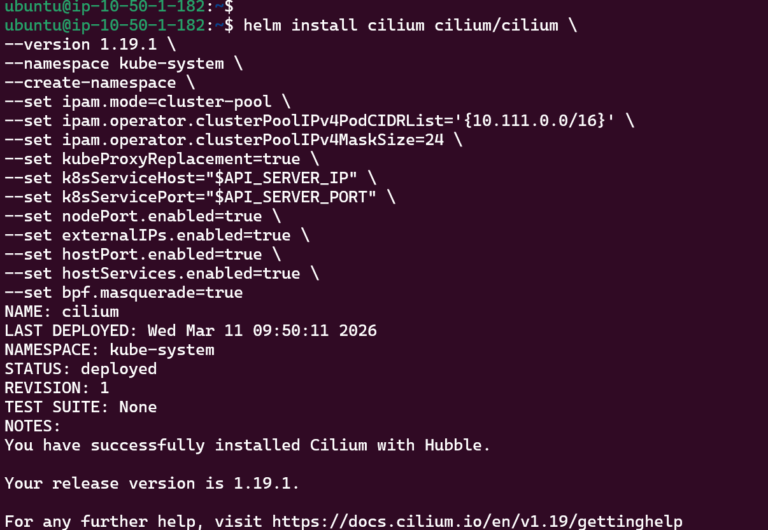

Install Cilium with kubeProxyReplacement=true:

helm install cilium cilium/cilium \
  --version 1.19.1 \
  --namespace kube-system \
  --create-namespace \
  --set ipam.mode=cluster-pool \
  --set ipam.operator.clusterPoolIPv4PodCIDRList='{10.111.0.0/16}' \
  --set ipam.operator.clusterPoolIPv4MaskSize=24 \
  --set kubeProxyReplacement=true \
  --set k8sServiceHost="$API_SERVER_IP" \
  --set k8sServicePort="$API_SERVER_PORT" \
  --set nodePort.enabled=true \
  --set externalIPs.enabled=true \
  --set hostPort.enabled=true \
  --set hostServices.enabled=true \
  --set bpf.masquerade=true

Key flags:

- ipam.mode=cluster-pool with clusterPoolIPv4PodCIDRList matches the pod subnet configured in Kind.

- kubeProxyReplacement=true activates Cilium’s eBPF‑based implementation of Service load balancing.

- k8sServiceHost and k8sServicePort tell Cilium where to reach the API server without relying on kube‑proxy.

- nodePort.enabled=true enables NodePort support in Cilium.

- bpf.masquerade=true allows Cilium to perform BPF‑based masquerading for traffic leaving the cluster.
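
One flag from the install command is not described in this list: clusterPoolIPv4MaskSize=24 sets the size of the block that each node receives from the pool. The arithmetic can be sketched quickly (the variable names are mine):

```shell
pool_prefix=16   # from clusterPoolIPv4PodCIDRList=10.111.0.0/16
node_mask=24     # from clusterPoolIPv4MaskSize=24

node_blocks=$(( 1 << (node_mask - pool_prefix) ))   # /24 blocks that fit in the /16
block_size=$(( 1 << (32 - node_mask) ))             # addresses in one /24 block

echo "per-node blocks available: $node_blocks"
echo "addresses per block: $block_size"
```

Both values come out to 256, so the pool has room for far more nodes than a Kind lab needs.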

In a real production environment, you would typically use type: LoadBalancer Services backed by Cilium’s native load balancer integration, which requires additional configuration such as defining CiliumLoadBalancerIPPool resources and, optionally, BGP or L2 announcement to advertise those IPs externally. This article focuses on a simpler lab setup where we simulate a LoadBalancer using a Cilium‑backed NodePort Service combined with Kind’s host port mappings on the EC2 instance.

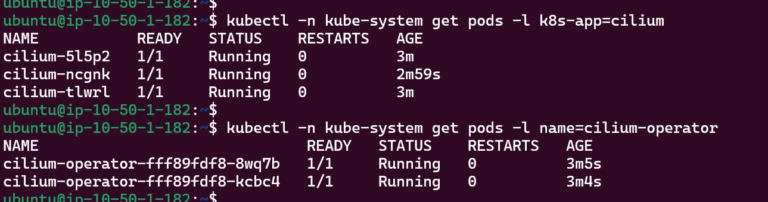

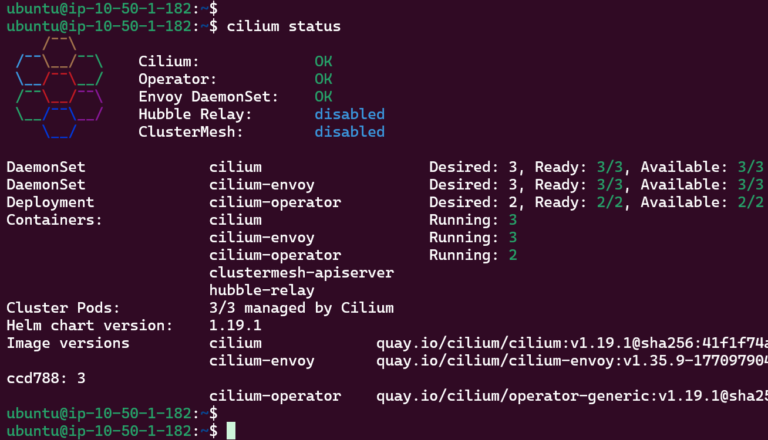

Run the following commands to verify the Cilium installation:

kubectl -n kube-system get pods -l k8s-app=cilium

kubectl -n kube-system get pods -l name=cilium-operator
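
The pods can take a minute or two to start, so instead of re-running the commands above by hand, you could wait for the rollouts to finish. A minimal sketch, assuming the default resource names created by the chart (DaemonSet `cilium`, Deployment `cilium-operator`); the wrapper name and the default timeout are my own choices:

```shell
# Hypothetical wrapper: block until the agent DaemonSet and the operator
# Deployment have finished rolling out. $1 = timeout (default 300s, an assumption).
wait_for_cilium() {
  kubectl -n kube-system rollout status daemonset/cilium --timeout="${1:-300s}" &&
  kubectl -n kube-system rollout status deployment/cilium-operator --timeout="${1:-300s}"
}

# Usage:
#   wait_for_cilium 300s
```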

Cilium CLI Installation

The Cilium CLI is not installed yet. Let’s fix that:

CILIUM_CLI_VERSION=$(curl -s https://raw.githubusercontent.com/cilium/cilium-cli/main/stable.txt)

CLI_ARCH=amd64

curl -L --fail --remote-name-all \
  https://github.com/cilium/cilium-cli/releases/download/${CILIUM_CLI_VERSION}/cilium-linux-${CLI_ARCH}.tar.gz{,.sha256sum}

sha256sum --check cilium-linux-${CLI_ARCH}.tar.gz.sha256sum

sudo tar xzvf cilium-linux-${CLI_ARCH}.tar.gz -C /usr/local/bin

rm cilium-linux-${CLI_ARCH}.tar.gz{,.sha256sum}

We can check Cilium status now:
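
The exact command is not shown in the text. With the Cilium CLI installed as above, the status check is presumably `cilium status --wait`. The wrapper below is a hypothetical sketch that also reports a missing binary:

```shell
# Hypothetical wrapper around the Cilium CLI status check.
check_cilium_status() {
  if ! command -v cilium >/dev/null 2>&1; then
    echo "cilium CLI not found on PATH" >&2
    return 1
  fi
  cilium status --wait   # waits until Cilium reports ready, or gives up after a timeout
}

# Usage:
#   check_cilium_status
```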

Kube-Proxy Replacement Verification

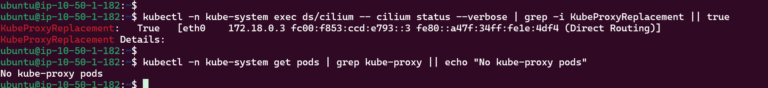

Let’s double-check that Kube-Proxy has been replaced by Cilium:

kubectl -n kube-system exec ds/cilium -- cilium status --verbose | grep -i KubeProxyReplacement || true

kubectl -n kube-system get pods | grep kube-proxy || echo "No kube-proxy pods"

This confirms that kube-proxy has been successfully removed and Cilium’s eBPF data plane is now providing Service load balancing for ClusterIP and NodePort traffic on the Kind cluster.
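
The grep above only shows the matching lines. For a scripted check, the value itself can be extracted. This is a sketch under an assumption: it expects the agent to print a line of the form `KubeProxyReplacement: <value> ...`, and the helper name `kpr_mode` is hypothetical.

```shell
# Hypothetical helper: print the value of the "KubeProxyReplacement:" line
# from `cilium status` output read on stdin (the line format is an assumption).
kpr_mode() {
  awk -F':' 'tolower($1) == "kubeproxyreplacement" { split($2, f, " "); print f[1]; exit }'
}

# Usage:
#   kubectl -n kube-system exec ds/cilium -- cilium status | kpr_mode
```

The helper prints nothing if the line is missing, so an empty result should be treated as a failed check.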

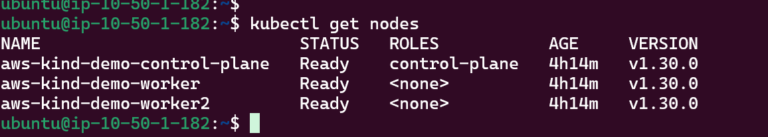

All the Nodes are now “Ready”:
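
The command behind this check is most likely `kubectl get nodes`. If you want to assert the result in a script, a small filter over its output is enough. The helper name `count_not_ready` is hypothetical:

```shell
# Hypothetical helper: count nodes whose STATUS column is not exactly "Ready"
# in `kubectl get nodes --no-headers` output read on stdin.
# A cordoned node ("Ready,SchedulingDisabled") is counted as not ready.
count_not_ready() {
  awk 'NF >= 2 && $2 != "Ready" { n++ } END { print n + 0 }'
}

# Usage:
#   kubectl get nodes --no-headers | count_not_ready
```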

Deploy Test Nginx App

Now we can deploy a simple nginx application and expose it via NodePort. Thanks to the port mappings in Kind, traffic will flow from the EC2 host’s port 80 into the NodePort inside the cluster.

kubectl create namespace demo

kubectl -n demo create deployment nginx \
  --image=nginx:stable-alpine \
  --port=80

kubectl -n demo expose deployment nginx \
  --type=NodePort \
  --port=80 \
  --target-port=80 \
  --name=nginx-svc

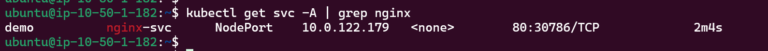

kubectl -n demo get svc nginx-svc

By default, Kubernetes will pick a random NodePort in the 30000–32767 range. We want to align it with the Kind port mapping (30080).

kubectl get svc -A | grep nginx

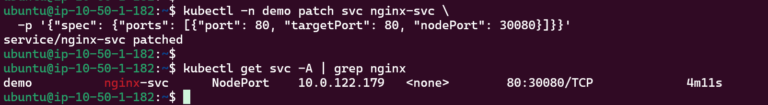

Patch the service:

kubectl -n demo patch svc nginx-svc \
  -p '{"spec": {"ports": [{"port": 80, "targetPort": 80, "nodePort": 30080}]}}'

Cilium’s eBPF datapath will now handle all load-balancing for this Service instead of kube‑proxy.
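
To confirm that the patch took effect, the nodePort can be read back and compared with the port that Kind maps from the host. A minimal sketch; the function name `assert_node_port` is hypothetical, and the Service has a single port, so index 0 is used:

```shell
# Hypothetical check: read the Service's nodePort and compare it with the
# expected value ($1, default 30080 as used in this post).
# The body runs in a subshell so its variables do not leak into the caller.
assert_node_port() (
  want="${1:-30080}"
  got=$(kubectl -n demo get svc nginx-svc -o jsonpath='{.spec.ports[0].nodePort}')
  if [ "$got" = "$want" ]; then
    echo "nodePort is $got"
  else
    echo "nodePort is '$got', expected $want" >&2
    exit 1
  fi
)

# Usage:
#   assert_node_port 30080
```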

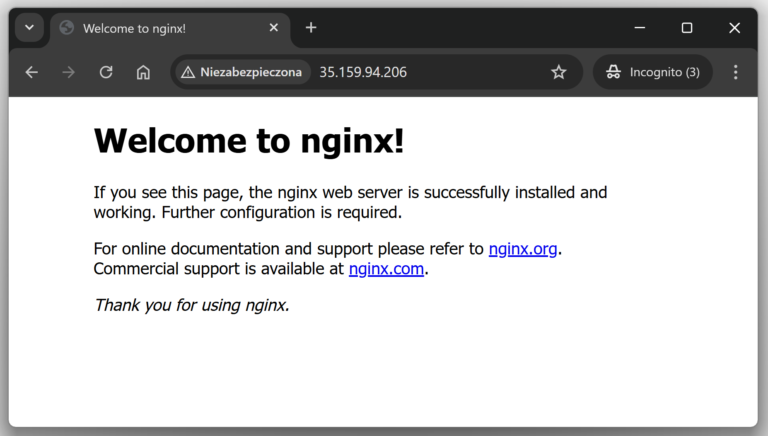

Test #1 - HTTP Access from Outside

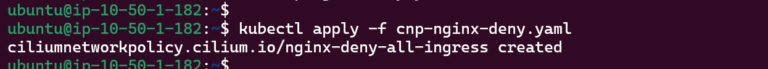
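
The same test can be run from a terminal. The sketch below assumes that the instance's security group allows inbound TCP 80 and that you substitute your own public IP or DNS name for the placeholder; the helper name `http_status` is hypothetical.

```shell
# Hypothetical helper: print only the HTTP status code for a URL.
# $1 = URL, $2 = timeout in seconds (default 5, an assumption).
# curl reports 000 when the connection fails or times out.
http_status() {
  curl -s -o /dev/null -w '%{http_code}' --max-time "${2:-5}" "$1" || true
}

# Usage (replace the placeholder with your instance's public IP or DNS name):
#   http_status "http://<EC2_PUBLIC_IP>/"
```

A 200 here means the request travelled from the host port through the Kind port mapping and the NodePort to an nginx pod.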

To demonstrate that Cilium’s eBPF data plane is actually enforcing traffic policies, I created a simple CiliumNetworkPolicy that denies all ingress to the nginx pods in the demo namespace. File: cnp-nginx-deny.yaml

apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: nginx-deny-all-ingress
  namespace: demo
spec:
  description: "Deny all ingress traffic to nginx pods in the demo namespace"
  endpointSelector:
    matchLabels:
      app: nginx
  ingress: []

Apply:

kubectl apply -f cnp-nginx-deny.yaml

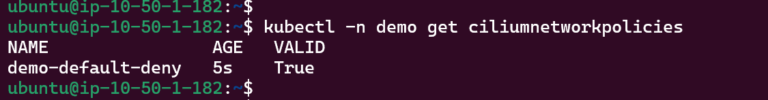

Verify the policy has been applied:

kubectl -n demo get ciliumnetworkpolicies

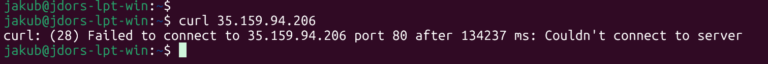

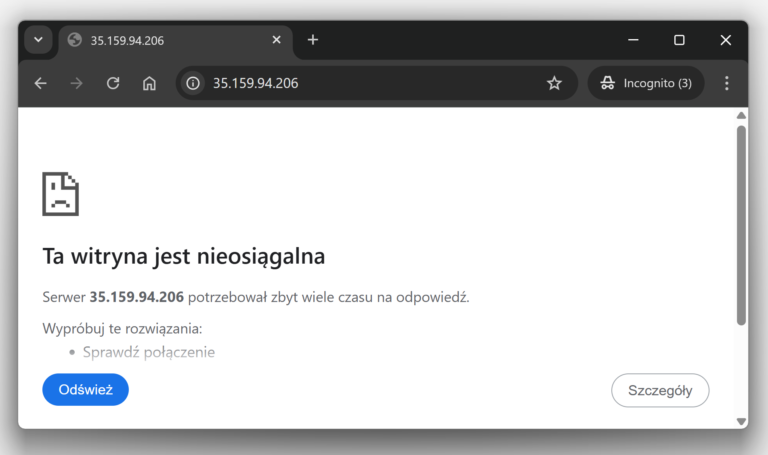

After applying this policy, the curl and web-browser tests that previously reached nginx now time out, even though the NodePort Service and Kind port mappings are still configured. This shows that Cilium’s eBPF-based policy engine is blocking the traffic before it ever reaches the nginx pods.

Conclusions

Cilium can fully replace kube‑proxy and handle all Kubernetes Service traffic (ClusterIP, NodePort, and our simulated LoadBalancer) directly in eBPF, without relying on iptables. With Kind running on EC2, you get a safe lab where you can remove kube‑proxy, enable Cilium’s kube‑proxy replacement, and then prove it’s really in control by exposing nginx over NodePort and later blocking that same traffic with a CiliumNetworkPolicy.